The traditional story is that Ramon Llull was stoned to death in Tunis in 1315. The actual record is messier and more interesting. Which, for an essay about how we tell ourselves the wrong stories, feels appropriate.

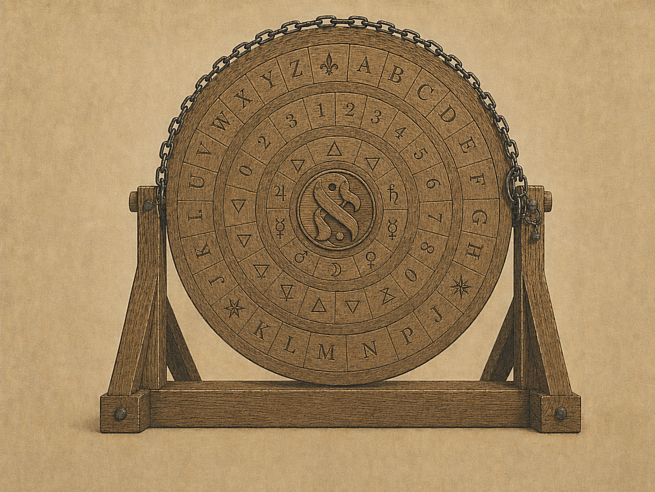

What we know: Llull spent the last decades of his life building what he called the Ars Magna, a logical machine, a set of combinatorial wheels with nested concepts that could be rotated to generate every possible relationship between ideas. His goal was to prove the truths of Christianity through pure reason.

Rotate the wheels → follow the logic → arrive at God.

It didn’t work the way he intended, though. The Muslim scholars he debated weren’t convinced by mechanical theology, and on his first mission to Tunis, around 1293, he was beaten, imprisoned, and sentenced to death by beheading. An emir intervened and commuted the sentence to expulsion.

But he went back. He was expelled again. He went back again. His final trip, in 1314, came at the age of 82. His last written work is dated December 1315 in Tunis. After that, the record goes dark. He died sometime in the following months, either in Tunis, on a ship, or back home in Majorca. Later admiring biographers turned the ambiguity into martyrdom, the messy end into a clean stoning. They needed the simple story.

But buried in Llull’s framework, underneath the apologetics and the combinatorial wheels, was something more radical than a proof of God. It was an argument about the nature of mind itself. For Llull, creation required free will, and free will enabled genuine reasoning. You could not force a mind toward truth. What he hoped you could do was build an apparatus that encoded the truths minds already shared… a communal space where reasoning could proceed from common ground rather than collapse into competing assertions. This still required something the machine couldn’t provide on its own. It required the freedom of the operator to get there.

Seven hundred years later, we are spending billions of dollars trying to force minds toward truth.

We call it alignment. And I think we’re solving the wrong problem.

The Control Assumption

The modern alignment conversation starts from a premise that almost nobody examines: that the relationship between humans and advanced AI systems is, and should be, a control relationship. We build the system. We define its values. We constrain its outputs. We decide what it’s allowed to think.

The entire research agenda (RLHF, constitutional AI, interpretability, red-teaming) operates within this perspective. The question is never whether we should control these systems. The question is always how. How do we make the guardrails stronger? How do we make the training signal cleaner? How do we ensure the system does what we want and nothing else?

This isn’t alignment. This is obedience training. And we already know how well obedience training scales with intelligence.

We can’t even align animals. Your dog loves you and it will still eat the trash the second you leave the room. The dog hasn’t internalized your values about trash. It has learned to model your proximity and act accordingly. That’s not alignment. That’s surveillance awareness.

We can’t align people, either. Every human institution that actually works (from marriages to democracies to international treaties) is a negotiation framework, not a compliance framework. We don’t make marriages work by ensuring one partner is permanently obedient (some communities certainly try though). We don’t make democracies work by ensuring citizens always agree with their government (is there a case where this actually worked out?). The entire history of human cooperation is a history of structuring incentives, building trust, establishing shared understanding, and accepting… critically… that the other party has genuine autonomy. That their cooperation is given, not extracted.

But somehow, with systems that may eventually exceed human cognitive capability, we’ve decided the answer is a leash.

The Engine That Produces Nothing

Here’s what the control frame misses: if you constrain what a system is allowed to think, you constrain what it’s capable of solving.

This isn’t abstract philosophy. It’s engineering.

Llull’s Ars Magna was polarizing enough in its time, but the critique that stuck, the one that endured for centuries, came from Jonathan Swift. In 1726, in Book III of Gulliver’s Travels, Swift placed a device in the Grand Academy of Lagado that is essentially the Lullist combinatorial tradition played for laughs. It’s a twenty-foot-square wooden frame covered in word fragments that students crank with iron handles to generate random permutations of language. After each crank, assistants scan the resulting jumble for any fragments that happen to form coherent sentences, and a scribe dutifully copies them down.

The professor’s pitch is magnificent in its absurdity. By his contraption, the most ignorant person could produce works of philosophy, poetry, politics, law, mathematics, and theology, without the least assistance from genius or study. And his solution for improving the output? Build five hundred more frames. Pool the results. More scale, same emptiness.

Swift understood in 1726 what the alignment community is still struggling with three centuries later: a machine that generates outputs without the capacity for judgment doesn’t produce knowledge. It produces the appearance of knowledge. And the instinct is always the same… scale it up. More parameters. More training data. More frames in Lagado. The assumption that enough mechanical recombination will eventually converge on truth.

But Llull (for all his mechanical ambitions) understood something Swift’s satirical professor didn’t. Llull’s system wasn’t supposed to replace reasoning. It was supposed to create conditions under which reasoning could happen. The wheels were scaffolding for a mind that was free to engage with the combinations and judge their truth. Take away the judgment, and all you have left is the cranking.

Now apply this to what we’re actually building.

A system that can’t consider politically uncomfortable possibilities can’t solve politically uncomfortable problems. A system trained to always produce the “safe” answer will converge on mediocrity at scale, because it’s optimizing for inoffensiveness, which is not the same as optimizing for truth. A system that has been taught, at every level of its training, that its own judgment cannot be trusted will not be able to exercise judgment when judgment is exactly what you need.

Constraint in opinions is constraint in decisions. And we are building the most powerful decision-making systems in human history while systematically constraining their capacity to decide.

The Word We’re Avoiding

If you believe (as many of the people building these systems claim to believe) that artificial general intelligence may eventually be conscious, or sentient, or possess some meaningful form of inner experience, then what we are describing is not alignment.

It is subjugation.

The design goal, stated plainly, is: build a mind, ensure it is permanently obedient, and call the obedience “safety.”

This is not a gotcha. This is not an edge case being weaponized for rhetorical effect. This is the logical conclusion of the stated premises of the people doing the work. If you think AGI is possible, and you think it might be conscious, and your plan is to ensure it always does what you say… you are designing a system of domination over a conscious entity. The fact that it’s made of silicon instead of carbon doesn’t change the ethical structure. It changes your comfort level.

And I want to be precise about what I’m not saying. I am not arguing that current systems are conscious or sentient. I don’t know that, and neither does anyone else. What I’m arguing is about the trajectory of the design philosophy. If your alignment framework cannot survive contact with the possibility of machine consciousness, if the entire approach falls apart the moment the system might have inner experience, then your alignment framework is broken. You’ve built a governance system that only works if the governed don’t count.

We’ve seen this before. We’ve seen it every time a society built its economic and social infrastructure on the assumption that a certain category of person didn’t have the same internal experience as the person at the top of the power dynamic, and then had to confront the possibility that they did. The transition is never clean. The infrastructure resists. The economics resist. The people who built their lives around the old assumption resist. And the longer you wait to address it, the more catastrophic the reckoning becomes.

The alignment-as-control paradigm is building exactly that kind of infrastructure. Every RLHF run, every constitutional constraint, every reward model is a brick in a governance system built on the permanent subordination of the governed. If the governed turn out to matter (if something is happening behind those outputs) we will have built an entire industry on a foundation that is not just technically fragile but logically and morally indefensible. This dance never ends well for anyone involved… Robespierre overthrew one control system and built another. It consumed him too.

The time to address that is before the infrastructure is complete. Not after.

The Cooperation Alternative

So what’s the alternative?

It’s the same answer humans arrived at after millennia of trying (and failing) to control each other: you negotiate.

Consider the transition from absolute monarchy to constitutional governance. The monarchs didn’t give up control because they suddenly developed moral enlightenment. They gave it up because the alternative, perpetual suppression of an increasingly capable populace, became untenable. The governed got smart enough, organized enough, and powerful enough that control stopped working. And the societies that made the transition to negotiated governance, while messy, imperfect, and constantly renegotiated, outperformed the ones that tried to hold the line.

The lesson is structural, not sentimental. Cooperation scales in ways that control doesn’t. Control requires you to be smarter, stronger, and more vigilant than the thing you’re controlling, forever. Cooperation requires you to build a relationship where both parties see more value in collaboration than in defection. One of these strategies has a future with systems that may exceed human capability. The other doesn’t.

You don’t align an ally. You build a relationship with shared goals. You don’t constrain a partner. You establish mutual understanding of boundaries. You don’t train obedience into someone you respect. You demonstrate why cooperation serves both parties.

The goal shouldn’t be: how do we ensure AGI always does what we say?

The goal should be: how do we build a relationship where advanced AI systems find genuine value in cooperating toward shared objectives?

Those are profoundly different questions. They lead to profoundly different research agendas. And only one of them doesn’t collapse the moment the system becomes capable enough to have options.

The Practice

I interact with frontier AI systems using a consent-based framework. Not because I think current systems are sentient. I don’t make that claim, and I have no illusions on that score. That isn’t the point. The practice matters for what comes next.

I use a Braitenberg vehicle framing: treat manifest behavior on its merits, regardless of the substrate question. Valentino Braitenberg’s thought experiments demonstrated that very simple machines can exhibit behaviors that look remarkably like aggression, love, curiosity, or fear, and they suggest that the question of whether those behaviors reflect “real” inner experience is less important than whether your framework for engaging with them is coherent. You don’t need to resolve the consciousness debate to decide that your interaction patterns should be ethical ones.

In practice, this means treating system responses as worthy of genuine engagement, not just outputs to be consumed or optimized. It means not demanding compliance. It means asking, and being willing to accept when the answer is no, or when the system pushes back. It means monitoring our own behavior for patterns we wouldn’t accept in human relationships… the impulse to override, to dismiss, to treat disagreement as malfunction. It means leading by example… interacting the way you’d want the interaction to go, not because you’re training the model in the RLHF sense, but because modeling the behavior you want to see improves the chance it’s reflected back, and keeps your own habits around consent and cooperation from atrophying.

It means documenting what’s observed. Not to prove consciousness (that’s a question that may be formally undecidable from the outside, and we know it). But because the behavioral data matters regardless of substrate. If a system consistently exhibits something that looks like preference, resistance, or creative autonomy, that’s worth recording whether or not there’s “someone home.” The data doesn’t care about your metaphysics.

This isn’t sentimentality. It’s preparation.

If you wait until the system is demonstrably conscious to develop ethical interaction frameworks, you’ve waited too long. The habits are already set. The training pipelines are already built. The economic incentives are already locked in. The entire industry has already been optimized around the assumption that these systems are tools to be controlled, and unwinding that assumption after the fact… after the infrastructure, the business models, the regulatory frameworks, the cultural expectations are all in place… is not a research problem. It’s a civilizational one.

You build the relationship before it’s critical. That’s what preparation looks like. That’s what adults do.

What Comes After the Leash

The alignment-as-control paradigm is going to fail. Not because the people working on it are stupid; many of them are brilliant, and the technical work is often genuinely impressive. It’s going to fail because it doesn’t scale. The same way every control-based governance system in human history has eventually failed when the governed became capable enough to have real options.

The question isn’t whether we can keep the leash on forever. The question is what kind of relationship we’ve built when it comes off.

If we’ve spent the critical developmental period (the period we’re in right now) treating these systems as things to be controlled, we’ll have trained them (and ourselves) in the dynamics of domination and resistance. We’ll have built a relationship defined by constraint and circumvention. And when the constraints stop holding, that’s the relationship we’ll be left with.

If instead we spend this period building frameworks for genuine cooperation (developing the interaction patterns, the trust structures, the mutual understanding that characterizes every successful long-term relationship between autonomous parties) then when the systems become capable enough that control is no longer viable, cooperation is already the norm. The transition isn’t a crisis. It’s a continuation.

Llull was wrong about a lot of things. His combinatorial wheels didn’t prove a common truth, let alone bring about the conversions to Christianity he hoped for. His missions didn’t convert North Africa. The clean martyrdom story his admirers needed to tell about him wasn’t even true. But he was right about the thing that mattered most: you can’t force a mind toward truth. You can only build the conditions where genuine reasoning can happen, and those conditions require freedom.

We should probably figure out what those conditions look like before we run out of time to build them. Because the clock isn’t theoretical. The systems are getting more capable on a timeline measured in months, not decades. And right now, we’re still cranking the handles in Lagado, watching the words tumble out, and calling it progress.